Test report

Evaluate, validate and certify GNSS performance

WHAT IS A TEST REPORT?

A geolocation test report is the deliverable issued by a test laboratory to the client at the end of the certification process, which includes the following stages:

- Identification of the Devices Under Test (DUT),

- Definition of test scenarios,

- Execution of measurements,

- Data analysis,

- Summary presentation of results.

The test report describes the GNSS performance measured with the Device Under Test. The results are put in context with respect to the selected test scenario and how representative it is of the targeted use cases. Upon request, the measurements are accompanied by graphics illustrating the statistical distributions of the collected data and by videos showing where the measurements were taken.

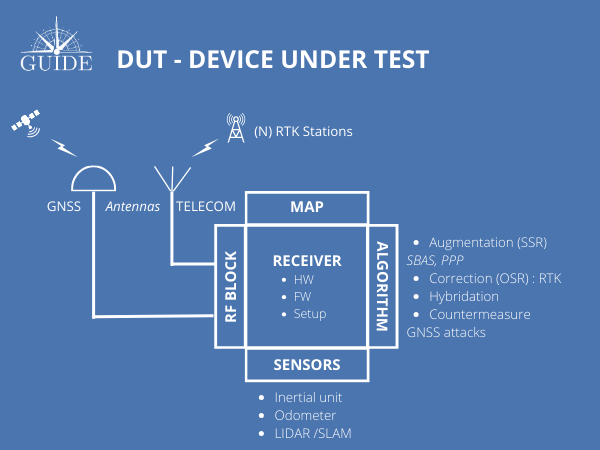

Device Under Test (DUT)

The DUT is the product, software, or service tested to assess its performance.

It may be a single component or a set of components:

- GNSS antenna

- GNSS receiver

- data processing and/or data fusion algorithms

- assistance services (SBAS, RTK, PPP, …)

- proprioceptive or exteroceptive sensors

- GNSS map

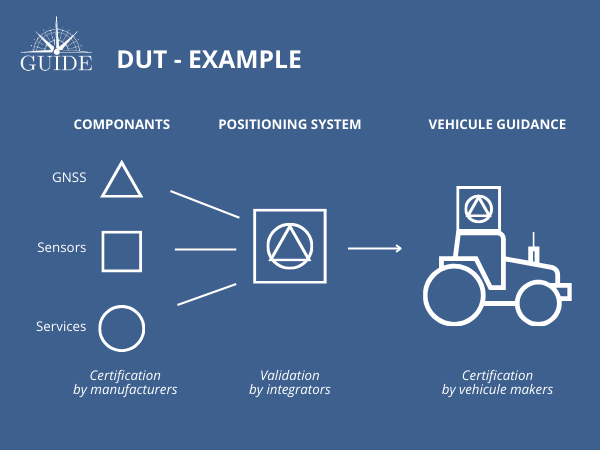

A DUT can be broken down into a set of sub-components that can be evaluated separately before being integrated into a positioning system.

Once the system has been designed, several series of tests are required to characterize its performance with respect to operational constraints. Initial assessments are performed in controlled environments, where constraints are considered individually.

The final tests, on the other hand, are performed under the worst possible conditions, combining several disturbances to probe the limits of the solution under test.

For example, if a receiver is subjected to a jamming signal, its countermeasures may be very effective until the test scenario adds a further disturbance, such as passing under a bridge or canopy. This then affects the results for the rest of the route.

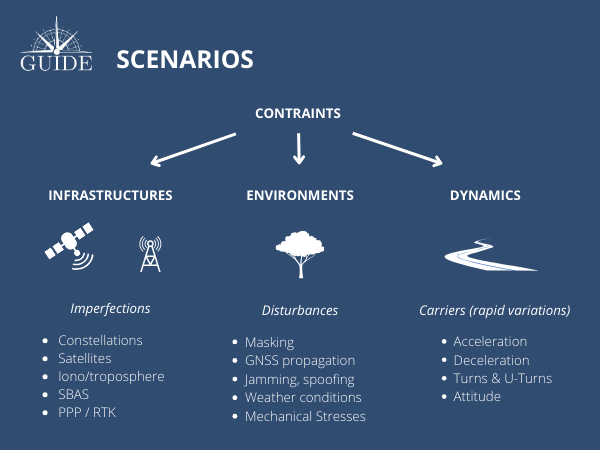

Test scenarios

A test scenario aims to reproduce the typical conditions of use of the DUT. Performance is thus tied to the DUT's usage contexts, so that the analysis is objective and fair.

In particular, the test conditions must consider the three main types of error sources simultaneously:

- the imperfections of “GNSS infrastructures”

- the disturbances caused by the “Local Environment”

- and the “Dynamics” applied to the DUT

GNSS infrastructures refer to:

- the constellations with their satellites

- the atmospheric layers

- the ground stations

- and all data services likely to impact performance

Local environments refer to all constraints likely to cause accuracy errors:

- GNSS signals can be masked, attenuated, diffracted, and reflected by nearby buildings, tree canopies, or terrain (e.g. in urban, semi-urban, or rural settings).

- Unexpected signals can interfere with those of the GNSS system, causing measurement errors and even denial of service.

- Ambient conditions can also be a source of errors when the terminal is subjected to extreme temperatures or vibratory phenomena.

Dynamics refer to the conditions under which the GNSS terminal moves. In this case, GNSS measurements are sensitive to rapid variations in speeds and trajectories (turns, roundabouts, etc.).

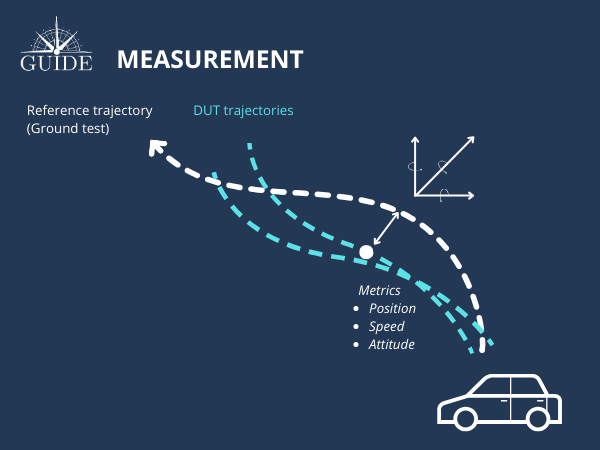

Test measurements

Accuracy performance is mostly expressed as errors relative to the true trajectory followed by the vehicle carrying the DUT. This reference trajectory, also known as ground truth, must be provided together with an estimate of the uncertainties inherent in the metrology instrumentation used. The more accurate this instrumentation, the smaller the uncertainties.
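As an illustration, the comparison with the ground truth can be sketched as a per-epoch horizontal error. This is a minimal sketch and not a laboratory procedure: the function name `horizontal_errors` is hypothetical, both tracks are assumed to be time-aligned and expressed as (east, north) coordinates in metres in the same local frame, and the uncertainty of the reference itself is not modelled.

```python
import math

def horizontal_errors(dut_track, reference_track):
    """Return the horizontal error (metres) of the DUT at each epoch.

    Assumption of this sketch: both tracks are lists of (east, north)
    tuples in metres, in the same local frame, sampled at the same epochs.
    """
    if len(dut_track) != len(reference_track):
        raise ValueError("tracks must be time-aligned and of equal length")
    return [
        math.hypot(e_dut - e_ref, n_dut - n_ref)
        for (e_dut, n_dut), (e_ref, n_ref) in zip(dut_track, reference_track)
    ]

# Made-up sample data, for illustration only.
reference = [(0.0, 0.0), (10.0, 0.0), (20.0, 0.0)]
dut = [(3.0, 4.0), (10.0, 1.0), (20.0, 0.0)]
errors = horizontal_errors(dut, reference)
print(errors)  # [5.0, 1.0, 0.0]
```

The same pattern applies to speed and attitude errors, with the scalar difference replacing the Euclidean distance.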

Accuracy performance is based on three primary metrics:

- Positioning

- Speed

- Attitudes (yaw, roll, and pitch angle measurements)

Other quantities can also be derived from them through calculation, such as:

- Realignment time to the nominal performance

- Error self-estimation or protection level

- Acceleration/Deceleration

- Rotational speed
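Some of these derived quantities follow from simple differentiation of the primary metrics. The sketch below, assuming evenly or unevenly spaced samples with strictly increasing timestamps, approximates acceleration from speed and rotational speed from yaw using finite differences. The helper name `finite_difference` is hypothetical, and yaw wrap-around at 360° is deliberately not handled.

```python
def finite_difference(values, timestamps):
    """Approximate the time derivative between consecutive samples.

    Returns one value per interval, so the result is one element shorter
    than the input. Timestamps are assumed to be strictly increasing.
    """
    if len(values) != len(timestamps):
        raise ValueError("values and timestamps must have the same length")
    return [
        (v1 - v0) / (t1 - t0)
        for v0, v1, t0, t1 in zip(values, values[1:], timestamps, timestamps[1:])
    ]

# Made-up sample data, for illustration only.
times_s = [0.0, 1.0, 2.0]
speeds_mps = [10.0, 12.0, 11.0]
yaw_deg = [0.0, 5.0, 15.0]

acceleration = finite_difference(speeds_mps, times_s)  # m/s^2
yaw_rate = finite_difference(yaw_deg, times_s)         # deg/s
print(acceleration)  # [2.0, -1.0]
print(yaw_rate)      # [5.0, 10.0]
```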

TEST DATA ANALYSIS

The analysis of the measurements reveals the discrepancies between the true trajectory and that of the DUT under consideration, which can itself be compared with a second product.

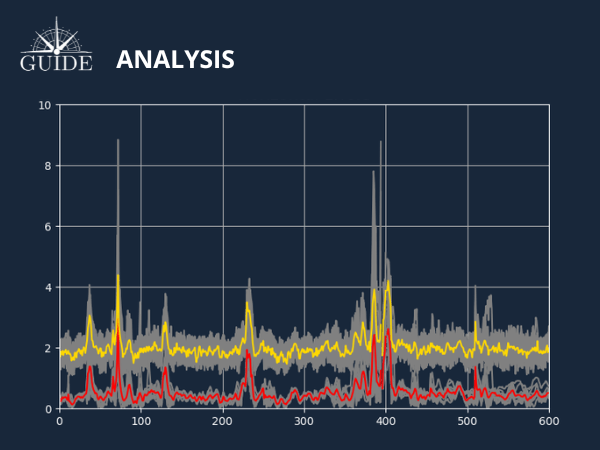

A first level of analysis, in the time/distance domain, provides a synthetic and intuitive view of the behavior of the DUT. In particular, error peaks and convergence times give a first idea of the performance.
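The two time-domain indicators mentioned above can be sketched as follows. The definitions are assumptions made for this example: the peak is the largest error of the run, and the convergence time is the first timestamp from which the error stays at or below a chosen threshold until the end of the run. The function names and the 2 m threshold are hypothetical.

```python
def peak_error(errors):
    """Return the largest error observed during the run."""
    return max(errors)

def convergence_time(timestamps, errors, threshold):
    """Return the first timestamp from which the error stays at or below
    `threshold` until the end of the run, or None if it never settles."""
    converged_at = None
    for t, err in zip(timestamps, errors):
        if err <= threshold:
            if converged_at is None:
                converged_at = t  # candidate start of the settled period
        else:
            converged_at = None   # threshold exceeded again: reset
    return converged_at

# Made-up sample data, for illustration only.
times_s = [0.0, 1.0, 2.0, 3.0, 4.0]
errors_m = [8.0, 3.0, 1.5, 0.9, 0.8]

print(peak_error(errors_m))                       # 8.0
print(convergence_time(times_s, errors_m, 2.0))   # 2.0
```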

A second level of analysis is required to provide measurements that can be used to characterize accuracy performance.

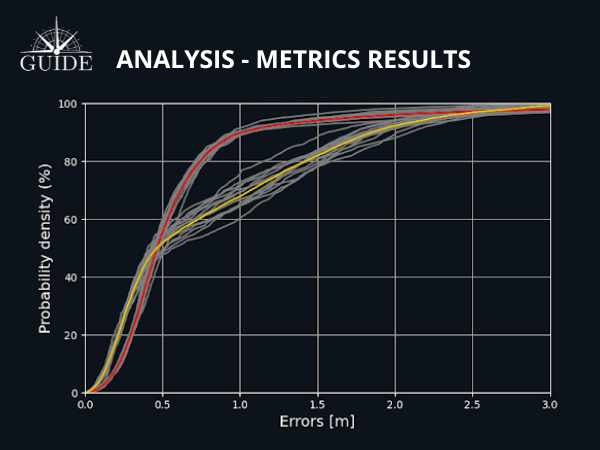

European standard EN16803-1 recommends sorting the measured deviations and accumulating them with a function to obtain a probabilistic description of performance.

This function, known as the “Cumulative Distribution Function” (CDF), indicates the performance of a given DUT on the selected metrics. For a given scenario, the CDF related to a DUT will always be the same. To account for both “trueness” and “precision” errors, several measurement runs are required. The average of the measurement errors at each point in time then determines the performance curve of the DUT.
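A minimal sketch of this step is given below. It averages the errors of several runs epoch by epoch, then builds an empirical CDF by sorting the averaged errors and assigning each one the fraction of samples at or below it. The function names are hypothetical, the runs are assumed to be sampled at the same epochs, and this simple estimator is an assumption of the example rather than the procedure specified by the standard.

```python
def mean_error_per_epoch(runs):
    """Average the errors of several runs at each epoch.

    `runs` is a list of equally long error lists, one per run.
    """
    return [sum(epoch) / len(epoch) for epoch in zip(*runs)]

def empirical_cdf(errors):
    """Return (error, cumulative probability) pairs, sorted by error."""
    ordered = sorted(errors)
    n = len(ordered)
    return [(err, (i + 1) / n) for i, err in enumerate(ordered)]

# Made-up sample data: two runs of the same scenario, four epochs each.
runs = [
    [1.0, 2.0, 5.0, 1.0],
    [0.0, 2.0, 3.0, 2.0],
]
mean_errors = mean_error_per_epoch(runs)
cdf = empirical_cdf(mean_errors)
print(mean_errors)  # [0.5, 2.0, 4.0, 1.5]
print(cdf)          # [(0.5, 0.25), (1.5, 0.5), (2.0, 0.75), (4.0, 1.0)]
```

Each pair reads as "this fraction of the samples has an error no larger than this value", which is the curve plotted in the report.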

If this curve is plotted on the same graph as that of a second DUT, it becomes easy to identify the best-performing product for the scenario under consideration.

The EN16803-1 standard proposes retaining three points of comparison: the error values within which 50%, 75%, and 95% of the measurements fall.
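The three comparison points can be read from the sorted errors. The sketch below uses the nearest-rank definition of a percentile, which is an assumption of this example (the exact estimator is not stated here), and compares two hypothetical DUTs on made-up data.

```python
def percentile(errors, p):
    """Nearest-rank percentile: smallest error such that at least p% of
    the samples are at or below it. `p` is an integer between 0 and 100."""
    ordered = sorted(errors)
    # Integer ceiling of p * n / 100, to avoid floating-point rounding.
    rank = max(1, (p * len(ordered) + 99) // 100)
    return ordered[rank - 1]

def comparison_points(errors):
    """Return the 50%, 75% and 95% comparison points for one DUT."""
    return {p: percentile(errors, p) for p in (50, 75, 95)}

# Made-up sample data: 20 error samples (metres) per DUT.
dut_a = [float(i) for i in range(1, 21)]
dut_b = [0.5 * i for i in range(1, 21)]

points_a = comparison_points(dut_a)
points_b = comparison_points(dut_b)
print(points_a)  # {50: 10.0, 75: 15.0, 95: 19.0}
print(points_b)  # {50: 5.0, 75: 7.5, 95: 9.5}

# In this example, DUT B has the smaller error at all three points.
b_is_better = all(points_b[p] < points_a[p] for p in (50, 75, 95))
```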

Test results

The presentation of the test results depends on their intended use.

To understand and interpret the results for the different test configurations, the client needs a comprehensive test report with measurement distributions in the time and distance domains, as well as the CDF curve if required.

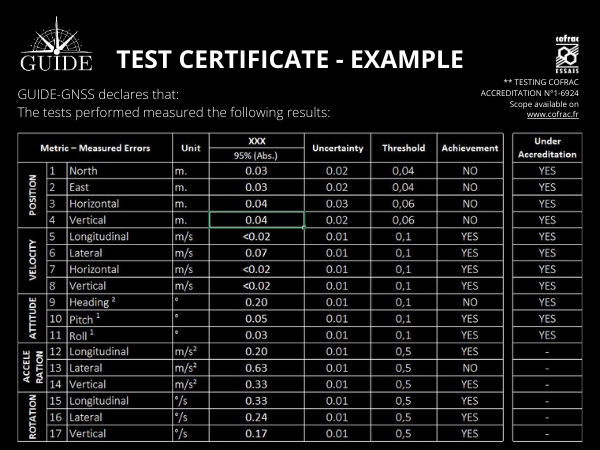

On the other hand, to certify test results, clients need to know whether the measurements comply with the thresholds pre-established in their field of activity. The results are then presented only as metric grids, comparing the observed measurements with those required to achieve the claimed performance.

Performance criteria then become a key factor in deciding whether or not to issue certification marks.
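A metric grid of this kind can be sketched as a simple comparison of measured values against required thresholds. The function name, the metric labels, and all numeric values below are hypothetical, and the rule "lower is better, pass if measured is at or below the requirement" is an assumption of this example, not a certification rule.

```python
def compliance_grid(measured, required):
    """Compare measured values with required thresholds.

    Both arguments map a metric name to a value in the same unit.
    A metric passes when the measured value is at or below the threshold.
    """
    return {
        name: {
            "measured": measured[name],
            "required": limit,
            "pass": measured[name] <= limit,
        }
        for name, limit in required.items()
    }

# Made-up values (metres), for illustration only.
measured = {"p50": 0.8, "p75": 1.4, "p95": 3.2}
required = {"p50": 1.0, "p75": 1.5, "p95": 3.0}

grid = compliance_grid(measured, required)
all_criteria_met = all(row["pass"] for row in grid.values())

for name, row in grid.items():
    status = "PASS" if row["pass"] else "FAIL"
    print(f"{name}: measured {row['measured']} / required {row['required']} -> {status}")
```

In this made-up case the 95% point exceeds its threshold, so `all_criteria_met` is `False`.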

The results and their interpretation are presented concisely, in table form, and make it possible to evaluate, validate, and certify the performance of the GNSS-based items under study.
